It's that time of the year again to check on my website's performance using Google PageSpeed Insights, and things could definitely use an upgrade. For those unfamiliar, PageSpeed Insights is an analysis tool from Google that loads your web pages and recommends improvements to their overall performance.

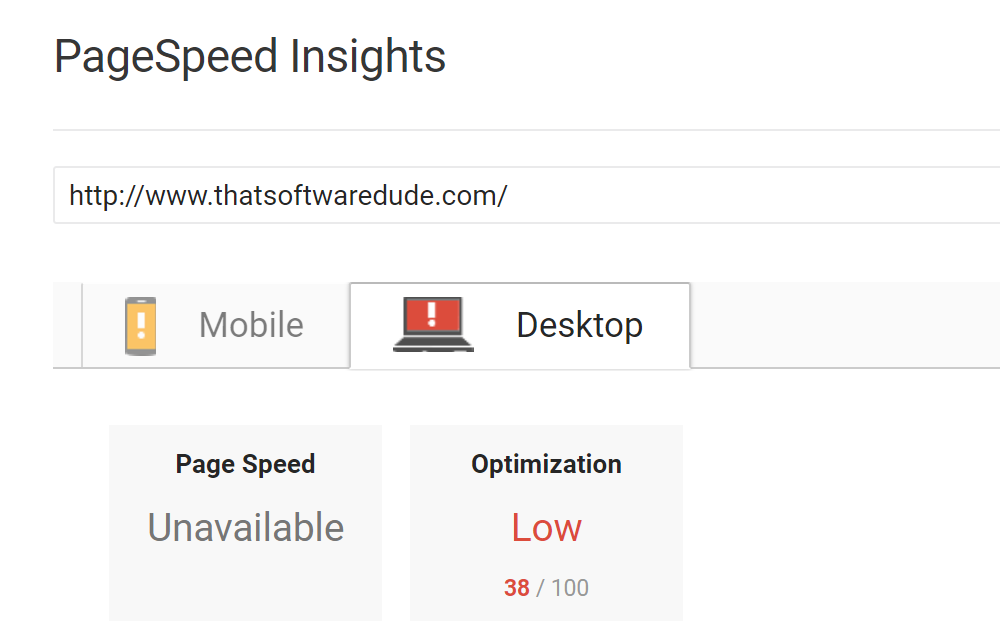

This blog scored below 50, and for some reason PageSpeed Insights didn't even bother to measure my actual page speed. Thanks, Google. It loads fine for me, but that is no excuse. I ran the PageSpeed Insights test some time ago and thought I had fixed all of the listed issues. If you are interested in that experience and how I went about correcting those issues, head on over here. The trouble is that everything changes, all the time. Since then, this blog has gone through various redesigns and feature additions, and its speed has changed along with them. So every now and then it's good to revisit old code, refactor it, and improve its general functionality.

Think of it like an oil change for your code. After some miles, things tend to slow down a bit, so it's good to go back in and do some maintenance.

Optimize images

This process is always challenging because it is somewhat subjective. Lossy compression reduces file size by discarding detail, so the smaller the file, the lower the quality. Ideally, you want to strike a good balance between the two. There are third-party tools out there, such as ImageMagick's convert command, that would take care of this for you, but I am on a shared GoDaddy server, so my ability to install software is limited.

Google does offer the option of downloading a zip file with all of your images compressed to an ideal file size. With over 400 blog posts and thousands of images, however, that is not a suitable option for me and would take a considerable amount of time to implement. .NET, on the other hand, offers image manipulation classes that I can use directly in my own code, which is the approach I ended up taking.

As it turns out, I'm also serving some pretty big image files as thumbnails on the homepage. This is a very easy fix, but one that many people overlook: I can generate thumbnail images when I upload new images to my website. .NET allows me to do this through its image manipulation classes. I will cover that process in another blog post; for now, just know that with a bit of server-side code you can dynamically create an image of any size you'd like.
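The resizing itself would be done by .NET's imaging classes (or any imaging library), but the part that is easy to get wrong is the arithmetic: fitting the image inside a maximum box without distorting it. Here is a minimal Python sketch of just that calculation. The function name and the 300x300 bound are hypothetical, not values from this site.

```python
def thumbnail_size(width: int, height: int, max_width: int, max_height: int) -> tuple[int, int]:
    """Return (width, height) scaled to fit inside max_width x max_height.

    The aspect ratio is preserved, and images that already fit are never upscaled.
    """
    if width <= 0 or height <= 0:
        raise ValueError("image dimensions must be positive")
    # The tighter of the two constraints wins; cap at 1.0 so small images stay as-is.
    scale = min(max_width / width, max_height / height, 1.0)
    # Round to whole pixels, keeping at least 1 pixel on each side.
    return max(1, round(width * scale)), max(1, round(height * scale))


# A 1920x1080 photo squeezed into a hypothetical 300x300 thumbnail box.
print(thumbnail_size(1920, 1080, 300, 300))  # (300, 169)
```

Computing the target size once at upload time means the homepage only ever serves the small file.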

With the images optimized a bit more, my score has already gone up a tidy sum. It's still in the red, but at least it shows that I'm headed in the right direction.

JPG vs PNG

Some of my thumbnails still have relatively large file sizes even though their dimensions are tiny, because they are saved as PNG files. PNG is a lossless format, which makes it a poor fit for photographic content. Converting the thumbnails to JPG reduces their size considerably. JPG does not support transparency, so that is lost in the process, but in my case that is just fine, since my goal really is to create low-resolution thumbnails.
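Because JPG has no alpha channel, any transparent pixels have to be blended onto a solid color during the conversion. Imaging libraries do this for you; the Python sketch below only shows the per-pixel arithmetic, the standard "over" compositing formula. It assumes 8-bit RGBA pixels and a white background, both of which are my assumptions rather than anything from the original setup.

```python
WHITE = (255, 255, 255)


def flatten_pixel(rgba: tuple[int, int, int, int], background=WHITE) -> tuple[int, int, int]:
    """Composite one 8-bit RGBA pixel over an opaque background color."""
    r, g, b, a = rgba
    alpha = a / 255  # 0.0 = fully transparent, 1.0 = fully opaque
    # "Over" operator: result = foreground * alpha + background * (1 - alpha)
    return tuple(
        round(fg * alpha + bg * (1 - alpha))
        for fg, bg in zip((r, g, b), background)
    )


def flatten_image(pixels, background=WHITE):
    """Flatten a sequence of RGBA pixels into RGB pixels suitable for JPG."""
    return [flatten_pixel(p, background) for p in pixels]


print(flatten_image([(0, 0, 0, 0), (10, 20, 30, 255), (0, 0, 0, 128)]))
# [(255, 255, 255), (10, 20, 30), (127, 127, 127)]
```

If your page background is not white, pass its color instead so the formerly transparent edges blend in.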

Hidden images

As it turns out, I had a fairly large number of images rendered onto the page that were never displayed. This was a "solution" I came up with some time ago to achieve a certain effect. The actual effect: an extra 3 MB of images for every visitor to download.
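Browsers generally still download an `<img>` even when it, or one of its parents, is hidden with `display: none`, which is how hidden images quietly add to page weight. A rough way to audit a page for them is sketched below in Python with the standard-library HTML parser. It is a sketch under assumptions: it only recognizes the `hidden` attribute and inline `display:none` styles (not rules in your style sheets), and it expects reasonably well-formed markup. The class and function names are hypothetical.

```python
from html.parser import HTMLParser

# Elements that never have a closing tag, so they must not be pushed on the stack.
VOID_TAGS = {"area", "base", "br", "col", "embed", "hr", "img", "input",
             "link", "meta", "source", "track", "wbr"}


def _hides_itself(attrs: dict) -> bool:
    """True if the element is hidden via the hidden attribute or an inline style."""
    style = (attrs.get("style") or "").replace(" ", "").lower()
    return "hidden" in attrs or "display:none" in style


class HiddenImageFinder(HTMLParser):
    def __init__(self):
        super().__init__()
        self.stack = []          # one entry per open element: is it (or an ancestor) hidden?
        self.hidden_images = []  # src of every <img> that will not be displayed

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        hidden = _hides_itself(attrs) or (bool(self.stack) and self.stack[-1])
        if tag == "img":
            if hidden:
                self.hidden_images.append(attrs.get("src"))
        elif tag not in VOID_TAGS:
            self.stack.append(hidden)

    def handle_endtag(self, tag):
        if tag not in VOID_TAGS and self.stack:
            self.stack.pop()


def find_hidden_images(html: str) -> list:
    finder = HiddenImageFinder()
    finder.feed(html)
    finder.close()
    return finder.hidden_images


page = ('<div style="display: none"><img src="a.png"><p><img src="b.png"></p></div>'
        '<img src="c.png"><img src="d.png" hidden>')
print(find_hidden_images(page))  # ['a.png', 'b.png', 'd.png']
```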

Enable compression

All modern browsers support and automatically negotiate gzip compression for all HTTP requests. Enabling gzip compression can reduce the size of the transferred response by up to 90%, which can significantly reduce the amount of time to download the resource, reduce data usage for the client, and improve the time to first render of your pages.
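The actual saving depends heavily on the content: repetitive text such as HTML, CSS, and JavaScript compresses very well, while already-compressed formats like JPG and PNG barely shrink. This small Python sketch, using the standard `gzip` module on some made-up, repetitive markup, is one way to check the ratio for yourself; the sample markup is hypothetical.

```python
import gzip


def gzip_savings(payload: bytes) -> float:
    """Fraction of bytes saved by gzip-compressing payload (0.9 means 90% smaller)."""
    compressed = gzip.compress(payload)
    return 1 - len(compressed) / len(payload)


# Hypothetical, highly repetitive list markup, similar to a blog archive page.
html = ('<li class="post"><a href="/blog/post">Post title</a></li>\n' * 500).encode("utf-8")
print(f"{len(html)} bytes -> {len(gzip.compress(html))} bytes "
      f"({gzip_savings(html):.0%} smaller)")
```

Real pages are far less repetitive than this sample, so expect a lower ratio on your own content.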

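If you are running your site on IIS, adding the following inside the `<configuration>` element of your web.config will take care of it for you. The urlCompression element turns on compression for static files and for dynamically generated responses, while clientCache tells browsers they may cache static content for up to a year, which also helps with browser caching below. Dynamic compression only takes effect if IIS has the Dynamic Content Compression module installed, which may not be the case on shared hosting.

```xml
<system.webServer>
  <!-- Compress static files (CSS, JS, etc.) and dynamically generated responses -->
  <urlCompression doStaticCompression="true" doDynamicCompression="true" />
  <staticContent>
    <!-- Let browsers cache static files for up to 365 days -->
    <clientCache cacheControlCustom="public" cacheControlMode="UseMaxAge" cacheControlMaxAge="365.00:00:00" />
  </staticContent>
</system.webServer>
```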

Minify JavaScript

There are multiple ways to minify JS code on your server. You could build your own minifier, which is the longer but more fun route. Most development environments and frameworks these days, however, offer their own minification processes. If you are working on an ASP.NET website, for example, you can use the built-in bundler, which minifies your resource files and combines them into a single file. It's very useful, and it's the route I took in this case.

This did not affect the overall score, however, probably because the file size barely changed in the end. But if you are working with larger resource files, this could definitely save you some bandwidth.
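The bundling half of that is conceptually simple: the files are concatenated in a fixed order so the browser makes one request instead of several. The Python sketch below shows only that concatenation step (no minification), with hypothetical function names; it is not how ASP.NET's bundler is implemented.

```python
from pathlib import Path


def bundle_sources(sources: list[tuple[str, str]]) -> str:
    """Concatenate (name, javascript) pairs into a single bundle, in order."""
    parts = []
    for name, code in sources:
        # A comment naming each source file makes the bundle easier to debug.
        parts.append(f"/* {name} */\n{code.strip()}")
    # A lone ';' between files stops one file's last statement from running into
    # the next file's first one (e.g. when a file ends without a semicolon).
    return "\n;\n".join(parts) + "\n"


def bundle_files(paths: list[str]) -> str:
    """Read each file as UTF-8 and bundle them in the order given."""
    return bundle_sources([(p, Path(p).read_text(encoding="utf-8")) for p in paths])


print(bundle_sources([("a.js", "var x = 1"), ("b.js", "console.log(x);")]))
```

Order matters: a script must come after anything it depends on, just as with separate `<script>` tags.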

Minify CSS

The same approach that I used above with the Bundler can be used here as well.

While this new minified file does much better than the previous version, it could still use some improvement according to Google. Overall, this change did not really increase the page score.
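For a sense of what a CSS minifier actually removes, here is a deliberately naive Python sketch based on regular expressions: it strips comments, collapses whitespace, and drops spaces around punctuation and the last semicolon in each rule. It does not understand strings or escapes (a comma inside `content: "a , b"` would be changed, for example), so treat it as an illustration rather than a replacement for a real minifier such as the one in the ASP.NET bundler.

```python
import re


def minify_css(css: str) -> str:
    """Naively minify CSS; assumes no significant whitespace inside strings."""
    # Remove /* ... */ comments, including ones that span several lines.
    css = re.sub(r"/\*.*?\*/", "", css, flags=re.DOTALL)
    # Collapse every run of whitespace (newlines, tabs, indentation) to one space.
    css = re.sub(r"\s+", " ", css)
    # Drop spaces around braces, semicolons, commas and child combinators.
    css = re.sub(r"\s*([{};,>])\s*", r"\1", css)
    # Drop spaces after colons only; a space *before* ':' can be meaningful ("a :hover").
    css = re.sub(r":\s+", ":", css)
    # The last declaration in a rule does not need its semicolon.
    css = css.replace(";}", "}")
    return css.strip()


sample = """
/* header styles */
body {
    margin: 0;
    padding: 0;
}
h1 , h2 {
    color: #333;
}
"""
print(minify_css(sample))  # body{margin:0;padding:0}h1,h2{color:#333}
```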

Another way around this is to simply inline all of your CSS on the page. You'll still want to minify that content to keep the page as small as possible. This removes the recommendation, but you will need to find a method that fits your setup for doing the inlining, and keep in mind that inlined CSS is downloaded again with every page instead of being cached as a separate file.
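As one example of such a method, here is a Python sketch that swaps stylesheet `<link>` tags for `<style>` blocks at build or render time. The function name and the `stylesheets` mapping (href to already-minified CSS) are hypothetical, and the regular expression only matches the simple `<link rel="stylesheet" href="...">` form with double quotes, so other attribute orders are left alone.

```python
import re

# Matches the simple form <link rel="stylesheet" href="..."> (optionally self-closing).
LINK_PATTERN = re.compile(r'<link\s+rel="stylesheet"\s+href="([^"]+)"\s*/?>', re.IGNORECASE)


def inline_stylesheets(html: str, stylesheets: dict[str, str]) -> str:
    """Replace known stylesheet links with inline <style> blocks.

    Links whose href is missing from the mapping are left untouched.
    """
    def replace(match: re.Match) -> str:
        css = stylesheets.get(match.group(1))
        return match.group(0) if css is None else f"<style>{css}</style>"

    return LINK_PATTERN.sub(replace, html)


page = ('<head><link rel="stylesheet" href="/css/site.min.css">'
        '<link rel="stylesheet" href="/css/print.css" /></head>')
print(inline_stylesheets(page, {"/css/site.min.css": "body{margin:0}"}))
```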

Leverage browser caching

Normally we don't want the browser to download assets on every single page load. Instead, we want it to cache as many static files as possible and serve those from the cache in order to improve performance. In most cases, this comes down to configuring your server with the appropriate caching rules. This, however, was not enough for Google yet again. It looks like there is one particular file that doesn't abide by the server rules: the Google Analytics tracking script, which is served from Google's own servers rather than mine.
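For the files you do serve yourself, a caching rule boils down to choosing a `Cache-Control` header per response. Here is a small Python sketch of one such policy; the extension list and the choice of `no-cache` for HTML pages are my assumptions. The one-year `max-age` is the same span as the `cacheControlMaxAge="365.00:00:00"` value in the web.config snippet above.

```python
from pathlib import PurePosixPath

# 365 days in seconds (31,536,000), the same span as cacheControlMaxAge="365.00:00:00".
ONE_YEAR_SECONDS = 365 * 24 * 60 * 60

# Hypothetical list of static asset types that are safe to cache for a long time.
STATIC_EXTENSIONS = {".css", ".js", ".png", ".jpg", ".jpeg", ".gif", ".svg", ".woff2"}


def cache_control_for(path: str) -> str:
    """Pick a Cache-Control header value for a request path (without query string)."""
    if PurePosixPath(path).suffix.lower() in STATIC_EXTENSIONS:
        return f"public, max-age={ONE_YEAR_SECONDS}"
    # Pages change whenever a post is edited, so the browser must revalidate them.
    return "no-cache"


print(cache_control_for("/css/site.min.css"))  # public, max-age=31536000
print(cache_control_for("/blog/some-post"))    # no-cache
```

With a year-long `max-age`, a changed file needs a new URL (for example, a version in the file name or query string), or returning visitors will keep using the old copy.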

Thanks again, Google. From what I could find online, there is no real way around this, short of hiding analytics from PageSpeed Insights. That isn't a real solution, but it will get you the 100 you are looking for.
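If you do decide to go that route anyway, the usual approach is to skip the analytics `<script>` tag when the request comes from the testing tool. The Python sketch below assumes that PageSpeed Insights identifies itself with "Chrome-Lighthouse" in its user-agent string; that marker is an assumption on my part, and Google can change it at any time. Real visitors still download the script, so the score stops reflecting what they actually experience.

```python
from typing import Optional

# Assumption: PageSpeed Insights runs Lighthouse, whose user agent contains this marker.
TEST_TOOL_MARKERS = ("Chrome-Lighthouse",)


def include_analytics(user_agent: Optional[str]) -> bool:
    """Return False when the request appears to come from PageSpeed Insights."""
    ua = user_agent or ""
    return not any(marker in ua for marker in TEST_TOOL_MARKERS)


print(include_analytics("Mozilla/5.0 (Windows NT 10.0; Win64; x64) Chrome/120.0 Safari/537.36"))  # True
print(include_analytics("Mozilla/5.0 (Linux; Android 7.0) Chrome/120.0 Mobile Safari/537.36 Chrome-Lighthouse"))  # False
```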

Reduce server response time

My current server response time is around 0.37 seconds, which, I agree, is somewhat slow for a simple web page. Again, this usually comes down to server configuration, as well as the programming framework you are using. PHP, for example, may return a simple page faster because it has relatively low overhead. ASP.NET, on the other hand, is more feature-rich and does more work on the server per request, which takes time to process.

For now, I will leave this as is since I am on a shared server and somewhat limited in what I can configure.

Render-blocking content

Google doesn't particularly like it when you load resources that affect above-the-fold content, because the browser has to wait for those resources to download before it can render that content, which delays what the visitor first sees. There are several ways to go about clearing this, and none of them are really any fun.

One method is to ensure that any above-the-fold content has its style rules defined inline in the head section. But this is tedious, mainly because it is a very manual process and most websites have massive style sheets that can't easily be broken down.

The quickest way to get the checkmark on this one is to minify and inline any resource files that you load in your head section. It's kind of messy, but Google does actually recommend inlining small CSS files.
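To find the candidates for inlining, it helps to list what in the head section is actually blocking: roughly, every stylesheet and every external script without `async` or `defer`. The Python sketch below collects those with the standard-library HTML parser. The class and function names are hypothetical, and it deliberately ignores finer points such as `media` attributes on stylesheets or `type="module"` scripts, which the browser treats differently.

```python
from html.parser import HTMLParser


class RenderBlockingFinder(HTMLParser):
    """Collect stylesheets and synchronous external scripts referenced in <head>."""

    def __init__(self):
        super().__init__()
        self.in_head = False
        self.blocking = []  # (kind, url) pairs in document order

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "head":
            self.in_head = True
        elif not self.in_head:
            return  # only the <head> section matters here
        elif tag == "link" and (attrs.get("rel") or "").lower() == "stylesheet":
            self.blocking.append(("css", attrs.get("href")))
        elif tag == "script" and "src" in attrs and "async" not in attrs and "defer" not in attrs:
            # A plain <script src> pauses HTML parsing until it downloads and runs.
            self.blocking.append(("js", attrs.get("src")))

    def handle_endtag(self, tag):
        if tag == "head":
            self.in_head = False


def find_render_blocking(html: str) -> list:
    finder = RenderBlockingFinder()
    finder.feed(html)
    finder.close()
    return finder.blocking


page = ('<html><head><link rel="stylesheet" href="/css/site.css">'
        '<script src="/js/app.js"></script><script src="/js/ga.js" async></script>'
        '</head><body><script src="/js/late.js"></script></body></html>')
print(find_render_blocking(page))  # [('css', '/css/site.css'), ('js', '/js/app.js')]
```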

The results are in...

And they are quite significant. Not quite 100, but very close: going from 28 to 83 is definitely an improvement. Only time will tell if it will have any effect on my overall traffic or site performance. But it was a fun exercise, and it definitely helped me spot a few issues in the way I was rendering content. My overall page size decreased by over half, and my load times dropped dramatically as well.

If you found this helpful, give it a thumbs up, and leave any tips you have for reaching 100 in the comments below.